DreamID-V open-sources high-fidelity video face swap

ByteDance and Tsinghua release a diffusion transformer pipeline with a faster inference variant and a more stable pose backend, plus practical scripts for 480p and 720p generation.

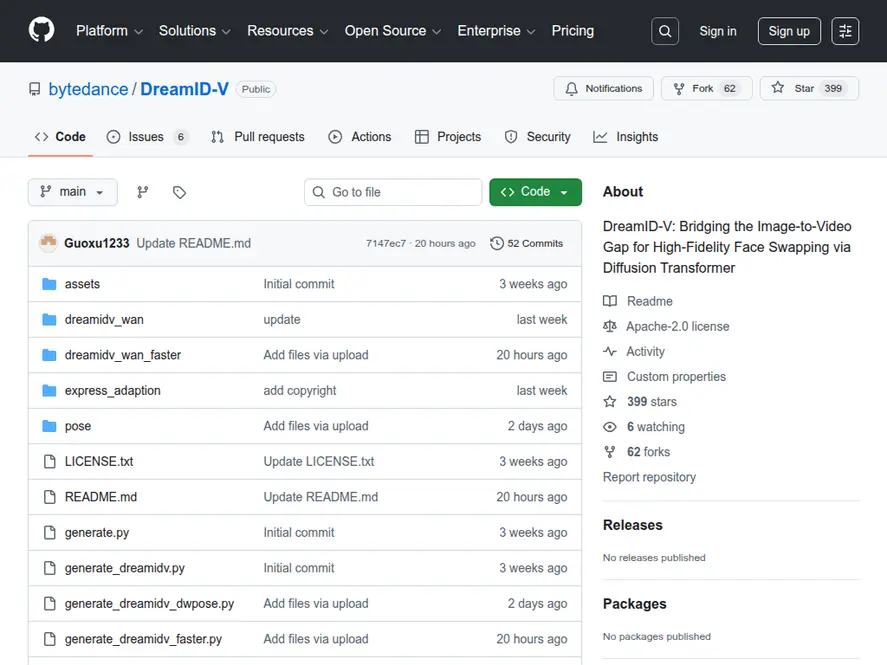

Overview

DreamID-V is an open-source video face swapping model from the Intelligent Creation Team at ByteDance and researchers at Tsinghua University. Released under the Apache-2.0 license, the project focuses on high-fidelity identity preservation when transferring a face from a reference image into a generated video.

The repository positions DreamID-V as a bridge between image identity conditioning and video generation by using a diffusion transformer pipeline. Practically, that means developers can start from a cropped face reference image and produce a video where the target identity remains consistent across frames, while motion and scene dynamics are handled by the underlying text-to-video backbone.

What is being released

The maintainers have published code, a paper (arXiv:2601.01425), and downloadable model weights. The pipeline reuses the VAE and text encoder from Wan2.1-T2V-1.3B, which makes that video generation backbone a key dependency. The repository also provides multiple inference entry points for different performance and stability tradeoffs.

Key recent updates in the repository include:

- DreamID-V-Wan-1.3B-Faster (01/12/2026): improved inference speed and lower VRAM usage

- DreamID-V-Wan-1.3B-DWPose (01/10/2026): more stable and robust pose extraction

- Community integrations, including ComfyUI support for node-based workflows and a version aimed at 16 GB of VRAM

Key features for builders

Identity-focused workflow

DreamID-V is designed around a simple but strict input requirement: a cropped face reference image (recommended 512x512). This aligns the pipeline with identity preservation first, rather than trying to infer identity from full body frames.
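
As a rough illustration of preparing that input, the sketch below computes a square crop region around a detected face so the result can be resized to 512x512 without distortion. The function name, the `margin` default, and the bounding-box format are assumptions made for this example; the repository may prepare its inputs differently.

```python
def square_crop_box(face_box, image_size, margin=0.25):
    """Return a square (left, top, right, bottom) crop around a face box.

    face_box:   (left, top, right, bottom) in pixels, from any face detector.
    image_size: (width, height) of the source image.
    margin:     extra context around the face, as a fraction of its larger
                side (0.25 is an arbitrary illustrative default).
    """
    left, top, right, bottom = face_box
    width, height = image_size

    # Side length: larger face dimension plus margin, capped by the image size.
    side = max(right - left, bottom - top) * (1 + 2 * margin)
    side = int(min(side, width, height))

    # Center the square on the face, then shift it back inside the image.
    cx, cy = (left + right) / 2, (top + bottom) / 2
    x0 = int(min(max(cx - side / 2, 0), width - side))
    y0 = int(min(max(cy - side / 2, 0), height - side))
    return (x0, y0, x0 + side, y0 + side)
```

With Pillow installed, the returned box can be passed to `Image.crop(box)` and the result resized with `Image.resize((512, 512))`.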

Multiple pose backends

Face swapping in video often fails when pose tracking is noisy. DreamID-V addresses this by shipping different inference scripts:

- MediaPipe-based pipeline for general usage

- DWPose-based pipeline for improved stability in pose estimation

Performance options: Faster variant

The Faster checkpoint and script target lower VRAM usage and improved throughput, which is valuable if you are deploying prototypes on a single GPU or iterating quickly.

Developer-ready scripts

The repo includes runnable Python scripts such as:

- generate_dreamidv.py

- generate_dreamidv_dwpose.py

- generate_dreamidv_faster.py

It also documents multi-GPU execution with torchrun, using FSDP and xDiT USP via xfuser.
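
A minimal sketch of how a wrapper might choose between these entry points and a `torchrun` launch is shown below. The variant labels (`base`, `dwpose`, `faster`) and the helper name are invented for illustration. Script-specific arguments are omitted because this article does not list them, and whether every script supports multi-GPU execution should be checked against the repository's README.

```python
# Script names as listed in the repository; the variant keys are our own labels.
SCRIPTS = {
    "base": "generate_dreamidv.py",
    "dwpose": "generate_dreamidv_dwpose.py",
    "faster": "generate_dreamidv_faster.py",
}


def build_command(variant, num_gpus=1):
    """Return an argv list for a single-GPU or torchrun launch.

    Script-specific arguments (inputs, resolution, FSDP/USP switches) are
    deliberately left out; take those from the repository README.
    """
    script = SCRIPTS[variant]
    if num_gpus > 1:
        # torchrun starts one worker process per GPU on this machine.
        return ["torchrun", f"--nproc_per_node={num_gpus}", script]
    return ["python", script]
```

The returned list can be extended with the documented arguments and passed to `subprocess.run`.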

Impact for developers

DreamID-V is notable because it packages a face swapping workflow around a modern video generation backbone with clear knobs for:

- Resolution targets: supports 480p and 720p, with a best-quality recommendation of 1280x720

- Sampling steps: guidance suggests reducing to 20 steps for simpler scenes to cut latency

- Hardware scaling: single-GPU scripts plus multi-GPU paths for teams experimenting with distributed inference

For founders and platform teams, the Apache-2.0 license reduces friction for experimentation and internal demos, while still requiring careful policy and user safeguards.

Practical setup notes

To run the DWPose or Faster pipelines, the repository expects pose models in a specific directory layout:

- pose/models/dw-ll_ucoco_384.onnx

- pose/models/yolox_l.onnx
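
Before launching a long run, it can help to confirm that both pose model files are in place. The check below is a small sketch using only the standard library; the helper name is made up for this example, and the paths are the two given above, relative to the repository root.

```python
from pathlib import Path

# File paths as given in the repository's setup notes.
REQUIRED_POSE_MODELS = (
    "pose/models/dw-ll_ucoco_384.onnx",
    "pose/models/yolox_l.onnx",
)


def missing_pose_models(repo_root="."):
    """Return the required pose model paths that are absent under repo_root."""
    root = Path(repo_root)
    return [p for p in REQUIRED_POSE_MODELS if not (root / p).is_file()]
```

An empty list means the layout matches what the DWPose and Faster pipelines expect.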

Dependencies are managed via requirements.txt, with a clear constraint: PyTorch 2.4.0 or newer.
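
The version floor is easy to verify up front. The helper below is an illustrative sketch, not part of the repository: it compares only the numeric release part of a version string, so local build tags such as `+cu121` are ignored and pre-release suffixes are not treated specially.

```python
import re


def meets_minimum(version, minimum=(2, 4, 0)):
    """True if a version string such as '2.4.0+cu121' is at least `minimum`."""
    # Keep only the leading numeric release part of the string.
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    # A missing patch number (for example '2.5') is treated as 0.
    parts = tuple(int(g) if g is not None else 0 for g in match.groups())
    return parts >= minimum
```

In an environment with PyTorch installed, calling `meets_minimum(torch.__version__)` performs the check against the installed build.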

Ethics and responsible use

The project includes an explicit ethics statement: it is intended for academic research and technical demonstrations. It prohibits illegal or harmful usage and recommends labeling outputs as AI-generated to reduce misinformation risk. If you plan to ship this capability, you will likely need additional controls such as consent checks, watermarking, audit logs, and abuse detection.

Bottom line

DreamID-V gives developers a practical, scriptable, open-source route to high-fidelity video face swapping with two important engineering upgrades: a Faster variant for efficiency and a DWPose variant for more reliable motion conditioning. For teams building creative apps, avatar systems, or internal media R&D pipelines, it is a strong reference implementation, as long as it is paired with serious safety and consent tooling.

Source: DreamID-V (GitHub repository)