Alibaba Releases MAI-UI for Agent-to-UI Workflows

A new open-source framework aims to standardize how AI agents generate and control user interfaces for end-to-end tasks.

Video: https://www.youtube.com/watch?v=q_GwdwSilIA

Overview

Alibaba has released MAI-UI, a new agent-to-user interface (Agent-to-UI) framework intended to help developers build applications where an AI agent can plan work, surface UI states, and trigger actions as part of a single workflow.

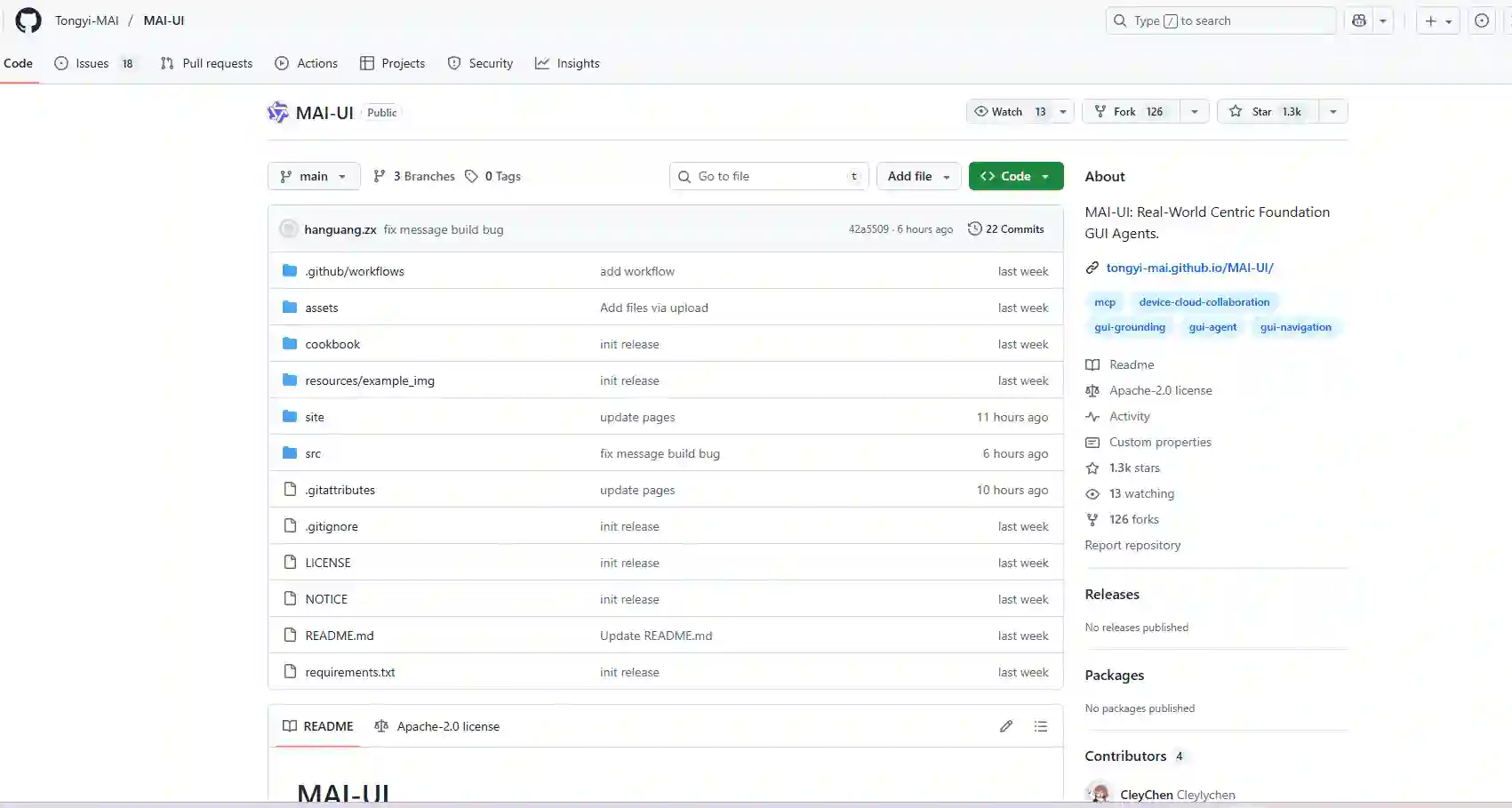

The project is published as open source on GitHub in the alibaba/MAI-UI repository. At the time of writing, the repository page was returning a GitHub error, so implementation specifics such as exact APIs, supported runtimes, and licensing could not be verified. The core positioning can still be inferred from the repository name and release title: MAI-UI is meant to connect agent reasoning and execution with UI presentation and user control.

What “Agent-to-UI” means in practice

In many LLM apps, developers end up building two separate systems:

- An agent loop that decides what to do next (tool calls, function execution, planning)

- A UI layer that tries to keep up with what the agent is doing (status, confirmations, previews)

An Agent-to-UI framework focuses on the seam between those layers: how an agent communicates intent and state to the interface, and how the interface sends feedback, approvals, or corrections back to the agent.
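To make that seam concrete, here is a minimal sketch of a two-way message protocol in Python. The message kinds (`step_started`, `approval_requested`, `approve`, `revise`, and so on) and the `encode`/`decode` helpers are hypothetical illustrations of the pattern, not MAI-UI's published API, which could not be verified.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Literal, Union

# Hypothetical message shapes illustrating the Agent-to-UI pattern;
# these are NOT taken from MAI-UI's API.


@dataclass
class AgentEvent:
    """Agent -> UI: what the agent is doing and why."""
    kind: Literal["step_started", "step_completed", "approval_requested"]
    step_id: str
    summary: str
    payload: dict = field(default_factory=dict)


@dataclass
class UIFeedback:
    """UI -> agent: the user's response to a step."""
    kind: Literal["approve", "reject", "revise"]
    step_id: str
    note: str = ""


Message = Union[AgentEvent, UIFeedback]


def encode(msg: Message) -> str:
    """Serialize a message, tagging its direction so one channel can carry both."""
    direction = "agent_to_ui" if isinstance(msg, AgentEvent) else "ui_to_agent"
    return json.dumps({"direction": direction, **asdict(msg)})


def decode(raw: str) -> Message:
    """Rebuild the typed message from its JSON form."""
    data = json.loads(raw)
    cls = AgentEvent if data.pop("direction") == "agent_to_ui" else UIFeedback
    return cls(**data)


# The agent asks for approval; the user answers with a correction.
request = AgentEvent("approval_requested", "step-3", "Delete 3 stale records", {"count": 3})
reply = UIFeedback("revise", "step-3", note="Only delete records older than 90 days")
assert decode(encode(request)) == request
assert decode(encode(reply)) == reply
```

Tagging each message with its direction lets a single WebSocket or event stream carry both agent updates and user feedback, which is one simple way to keep the two layers in sync.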

The agent-UI seam is also where commercial and ecosystem tooling can complement an open framework. For example, AI-powered UI rendering platforms such as C1 by Thesys focus on turning agent or LLM outputs into interactive UI components (tables, forms, charts) that can be embedded in product experiences.
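One way to implement this kind of rendering is to ask the model for a structured spec and validate it before the UI draws anything. The sketch below is a generic illustration, not C1's or MAI-UI's API: the `ALLOWED` component names and their required fields are assumptions, and anything that fails validation falls back to plain text.

```python
import json

# Hypothetical component catalog: component name -> fields it must carry.
ALLOWED = {
    "table": {"columns", "rows"},
    "form": {"fields"},
    "chart": {"kind", "series"},
}


def to_component(llm_output: str) -> dict:
    """Turn a model reply into a renderable component spec, else plain text."""
    fallback = {"component": "text", "text": llm_output}
    try:
        spec = json.loads(llm_output)
    except json.JSONDecodeError:
        return fallback  # not JSON: show it as ordinary text
    if not isinstance(spec, dict):
        return fallback  # JSON, but not an object
    name = spec.get("component")
    required = ALLOWED.get(name) if isinstance(name, str) else None
    if required is None or not required.issubset(spec):
        return fallback  # unknown component or missing fields
    return spec
```

Validating against a whitelist keeps a malformed or unexpected model reply from breaking the interface; the user simply sees the raw text instead.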

Key features (based on the stated release intent)

Detailed documentation could not be verified at the time of writing, but MAI-UI’s stated purpose suggests it targets the building blocks that agent-first products commonly need:

- Rendering of structured agent state in the UI, so users can see what the agent is doing and why

- Action and approval steps to keep humans in the loop for risky operations

- UI-friendly outputs such as intermediate results, previews, and task progress

- Workflow consistency across apps, steps, and agent decisions
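The approval-step idea can be sketched in a few lines: actions flagged as risky pause for a human decision, while safe ones run straight through. The `Action` type, the `risky` flag, and the `approve` callback are hypothetical; in a real UI the callback would be backed by a confirmation dialog rather than a lambda.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    """Hypothetical unit of agent work."""
    name: str
    risky: bool               # e.g. deletes data or sends messages
    run: Callable[[], str]    # performs the side effect, returns a result


def execute(actions: List[Action], approve: Callable[[Action], bool]) -> List[str]:
    """Run actions in order; risky ones run only if approve(action) returns True."""
    log = []
    for action in actions:
        if action.risky and not approve(action):
            log.append(f"skipped:{action.name}")  # surfaced to the UI as declined
            continue
        log.append(f"done:{action.name}:{action.run()}")
    return log


plan = [
    Action("draft_reply", risky=False, run=lambda: "draft saved"),
    Action("send_email", risky=True, run=lambda: "sent"),
]
print(execute(plan, approve=lambda a: False))
# ['done:draft_reply:draft saved', 'skipped:send_email']
```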

More broadly, the ecosystem is moving toward AI-powered UI generation and interactive prototyping, which makes it easier to create and iterate on the UI surfaces that agent workflows depend on.

Why this matters for builders

Agent experiences often break down at the UI boundary. Users lose trust when they cannot:

- Understand what the agent is doing

- Inspect intermediate artifacts

- Stop or revise a step

- Confirm destructive actions

A dedicated framework can reduce the amount of custom glue code needed to translate agent events into UI updates. For teams shipping to production, that can also mean:

- Fewer “black box” agent interactions

- More debuggable flows (clear step boundaries and states)

- Better safety controls (approval gates, visibility into side effects)
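The “glue code” in question is often a function that folds a stream of agent events into the state the UI renders. Below is a minimal reducer-style sketch; the event types (`step_started`, `step_output`, `step_finished`, `step_failed`) are hypothetical. Keeping every intermediate snapshot is what makes the flow debuggable: you can replay the events and inspect the UI state after each step.

```python
from copy import deepcopy
from typing import Dict, List


def reduce_ui_state(state: Dict, event: Dict) -> Dict:
    """Return a new UI state with one agent event applied (input is not mutated)."""
    new = deepcopy(state)
    steps = new.setdefault("steps", {})
    kind, step = event["type"], event["step"]
    if kind == "step_started":
        steps[step] = {"status": "running", "output": None}
    elif kind == "step_output":
        steps[step]["output"] = event["output"]  # intermediate artifact to preview
    elif kind == "step_finished":
        steps[step]["status"] = "done"
    elif kind == "step_failed":
        steps[step]["status"] = "failed"
        steps[step]["error"] = event.get("error", "")
    else:
        raise ValueError(f"unknown event type: {kind}")
    return new


def replay(events: List[Dict]) -> List[Dict]:
    """Fold events into state, keeping a snapshot after each one."""
    snapshots, state = [], {}
    for event in events:
        state = reduce_ui_state(state, event)
        snapshots.append(state)
    return snapshots


history = replay([
    {"type": "step_started", "step": "query"},
    {"type": "step_output", "step": "query", "output": "SELECT 1"},
    {"type": "step_finished", "step": "query"},
])
print(history[0]["steps"]["query"]["status"], history[-1]["steps"]["query"]["status"])
# running done
```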

Impact for developers and product teams

If MAI-UI delivers on its Agent-to-UI goal, it can help teams building:

- Autonomous assistants for operations, customer support, or internal tooling

- Dev tools where agents generate code changes and show diffs before applying

- Data and analytics copilots that build queries and visualize results iteratively

- Workflow automation with human review checkpoints
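For the dev-tools case, the “show diffs before applying” step can be as simple as rendering a unified diff with Python's standard `difflib` module and writing the change only after the user approves. The `preview_change` and `apply_if_approved` helpers are hypothetical names used for illustration.

```python
import difflib
from pathlib import Path


def preview_change(path: str, before: str, after: str) -> str:
    """Render a unified diff the UI can display before anything is written."""
    return "".join(
        difflib.unified_diff(
            before.splitlines(keepends=True),
            after.splitlines(keepends=True),
            fromfile=f"a/{path}",
            tofile=f"b/{path}",
        )
    )


def apply_if_approved(path: Path, after: str, approved: bool) -> bool:
    """Write the agent's proposed content only if the user approved the diff."""
    if approved:
        path.write_text(after)
    return approved


print(preview_change("app.py", "x = 1\ny = 2\n", "x = 2\ny = 2\n"))
```

An empty diff is a useful signal too: the UI can skip the review step entirely when the agent proposes no change.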

In these products, the UI is not just a chat window. It becomes a control surface for planning, execution, and verification.

In practice, many teams will pair an Agent-to-UI layer with orchestration frameworks and agent runtimes. For teams exploring multi-agent platforms such as CrewAI, MAI-UI-style patterns can make complex agent handoffs more visible and controllable in the interface.

This direction also aligns with a recent shift toward visual workflows and design-focused AI apps, where users guide, review, and adjust AI-driven work through richer UI states rather than pure chat.

How to evaluate MAI-UI in your stack

Because the repository details could not be verified at the time of writing, a practical evaluation checklist for teams is:

- Does it expose a clear event or state model for agent steps?

- Can you add confirmations and rollback-friendly patterns for risky actions?

- Does it work with your agent runtime (tool calling, function execution, streaming)?

- Can your UI team theme, extend, and instrument it (logs, analytics, tracing)?
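For the rollback question, one well-known pattern is compensating actions, as in the saga pattern: each step is registered with an undo operation, and if a later step fails, the completed steps are undone in reverse order. This is a generic sketch of that pattern, not a description of what MAI-UI provides; the step names and the `run_with_rollback` helper are hypothetical.

```python
from typing import Callable, List, Tuple

# (name, do, undo): a hypothetical step with its compensating action.
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_with_rollback(steps: List[Step]) -> List[str]:
    """Run steps in order; on the first failure, undo completed steps in reverse."""
    completed: List[Step] = []
    log: List[str] = []
    for step in steps:
        name, do, _ = step
        try:
            do()
        except Exception as exc:
            log.append(f"failed:{name}:{exc}")
            for done_name, _, undo in reversed(completed):
                undo()
                log.append(f"undone:{done_name}")
            return log
        completed.append(step)
        log.append(f"done:{name}")
    return log


records: List[str] = []


def publish() -> None:
    raise RuntimeError("quota exceeded")


log = run_with_rollback([
    ("create", lambda: records.append("draft"), lambda: records.remove("draft")),
    ("tag", lambda: records.append("tag"), lambda: records.remove("tag")),
    ("publish", publish, lambda: None),
])
print(log)      # ['done:create', 'done:tag', 'failed:publish:quota exceeded', 'undone:tag', 'undone:create']
print(records)  # []
```

The returned log doubles as an audit trail the UI can show, so users see both what failed and what was reverted.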

What to watch next

As the repository documentation becomes accessible, developers should look for:

- Reference demos that show real “agent step to UI” patterns

- Compatibility notes for popular LLM providers and agent frameworks

- Guidelines for safe actions, approvals, and audit trails

For builders shipping agentic apps globally, MAI-UI is a signal that the industry is moving from chat-only interfaces to structured, inspectable, and controllable agent experiences.


Source: alibaba/MAI-UI on GitHub